Minors are increasingly exposed to the maelstrom of social networks. Large technology companies set minimum ages for access, but in many cases these virtual barriers are not enough, since children and adolescents know very well how to fool verification systems.

What does the law say? Legislation differs from country to country, although most social networks set a minimum age for access. In Spain, the age at which minors can have their own account without parental supervision and consent is 14, according to Royal Decree 1720/2007, of December 21, which approves the regulations implementing Organic Law 15/1999, of December 13, on the protection of personal data.

“The data of those over fourteen years of age may be processed with their consent, except in those cases in which the Law requires the assistance of the holders of parental authority or guardianship for its provision. In the case of minors under 14 years of age, the consent of the parents or guardians will be required,” the regulation states.

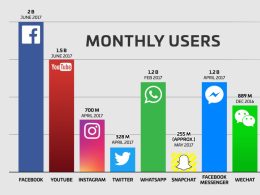

However, in some cases technology companies set a different minimum: 13 years in the case of TikTok, Twitter, WhatsApp, Snapchat, Tumblr or Reddit, and also on others such as Facebook, Instagram, YouTube or Flickr, as long as parents or guardians give permission.

These platforms warn in their terms of use that they may delete profiles that violate the legislation on the protection of minors' data. In practice, however, they do not always check whether a user really meets the minimum age unless the account breaches some other condition or is reported by another user.

Do minors lie about their age?

In the end, lying to a platform is quite simple, so once minors have access to the Internet and mobile devices without much parental control, creating an account on Instagram, Facebook or TikTok is not difficult. They just have to lie about their age and give a false name.

We discussed exactly this recently in connection with TikTok. First of all, and as mentioned above, if we go by the rules and permitted ages, no child under 13 should be using the app, since the company's terms do not allow it. But the reality is very different.

In its latest transparency report, TikTok acknowledges that it deleted a total of 7,263,952 accounts “suspected” of belonging to minors under the permitted age in the first three months of the year alone. But beware: there is no way to know exactly how many users under 13 actually use the service. These are accounts in which users voluntarily entered their date of birth, identifying themselves as under 12 years of age when registering. Many others are not so honest.

TikTok has stressed on numerous occasions that it offers a ‘special version’ of the platform on which underage users can register. But, of course, nobody wants to sit at the children's table when they are about to enter adolescence.

Putting barriers in place is increasingly necessary in light of the data: according to an INE survey, 69.5% of the Spanish population aged 10 to 15 has a mobile phone. If we widen that range up to the age of majority, the percentage rises to 90%. Other reports indicate that an alarming 68% of children aged 10 to 12 have social network accounts. As a result, technology companies are stepping up and tightening their measures to gain greater control over how children and adolescents use their services.

For example, YouTube has recently begun asking users it believes to be minors, and who are trying to access content inappropriate for their age, for a photo of their ID or credit card. Instagram, for its part, has announced that it is developing new technologies based on machine learning to verify that a teenager who opens an account on the social network is over 14 years old, a method that it also uses on Facebook. In addition, practically all of them offer some type of parental control.

Sometimes, to satisfy children's strong desire to be part of the digital world too, social networks propose ‘intermediate’ solutions: something like virtual worlds only for minors, in which they are safer from problems such as cyberbullying or exposure.

One example is YouTube Kids, a standalone app with content geared toward children between the ages of 2 and 8. The content of the application can be configured for three groups: preschoolers, school children and all ages. It consists of four main sections: Programs, Music, Learn and Explore. But the project has not been free of problems and has faced controversy because its algorithm has let through videos with inappropriate content.

So making alternative versions for children does not seem to be the definitive solution. Even so, some companies are determined to keep trying.

Instagram has also wanted to experiment in this field. According to statements made to BuzzFeed News by the head of the platform, Adam Mosseri, the company is working on a version of the popular photo-sharing application for children under 13 years of age.

Mosseri himself wrote on Twitter, in response to another user, that “kids are increasingly asking their parents if they can join apps that help them keep up with their friends.” “A version of Instagram where parents are in control, like we did with Messenger Kids, is something we are exploring. We will share more in the future,” he added. As noted above, current Instagram policy prohibits children under 13 from accessing the platform.

Unsurprisingly, this idea has generated a lot of controversy, not only with regard to children's privacy but also over its legality. A total of 35 organizations and 64 individual experts joined forces to urge Facebook to abandon its plan to create a version of Instagram for children under 13, a project they describe as a “great risk” for young users.

This international coalition of public health and child safety advocates raised concerns with Facebook executives about the effects such a platform could have on minors' privacy, screen time, mental health and self-esteem, and about the commercial pressure it could put on them.

As Mosseri himself points out, this is not the first time that Facebook has proposed a platform just for children: it has already done so with Messenger Kids, the children's version of its chat app. It differs from its sister application for adults in that it has no advertising or in-app purchases, and the contact list has to be approved by the parents. Even then, parents and educators called for the app to be withdrawn, considering it unnecessary and harmful to minors.

The latest scandal broke when The Wall Street Journal published internal research from Mark Zuckerberg's company in a series called ‘The Facebook Files’. The research makes clear that Instagram causes many problems related to insecurity, anxiety and lack of confidence, especially among adolescent girls.

“32% of girls say that when they feel bad about their body, Instagram makes them feel worse,” reads the document published by the American newspaper, which dates from 2020 (the company had already carried out a study with similar conclusions in 2019).

Instagram has responded by saying that it is committed to “understanding the complex and difficult problems” of this sector of the population.

How to control the safety of minors on Instagram

As we have seen, the world of social networks is at anyone's fingertips, even minors'. This is a cause for concern for parents, since children and adolescents are at risk of becoming victims of harassment, insults or slander through mobile phones and social platforms.